A few years ago I was borderline giddy about the idea of a DAO on Ethereum: an organization with no staff. No org chart. No payroll. Just rules encoded in smart contracts. If you held the token, you had voting rights, and the chain executed whatever the collective decided.

At the time, that felt like the edge of “autonomous business.”

Turns out… it was basically the warm-up act.

Because right now we’re watching something weirder (and, honestly, more consequential): autonomous agents forming societies, not just executing transactions.

The new pattern: give one instruction… and step back

Three examples are worth sitting with.

- In Project Sid, researchers dropped 10 to 1,000+ AI agents into Minecraft and watched them develop recognizable civilizational behavior: role specialization, collective rules, cultural transmission, even religion. They didn’t just “do tasks.” They formed institutions. (arXiv)

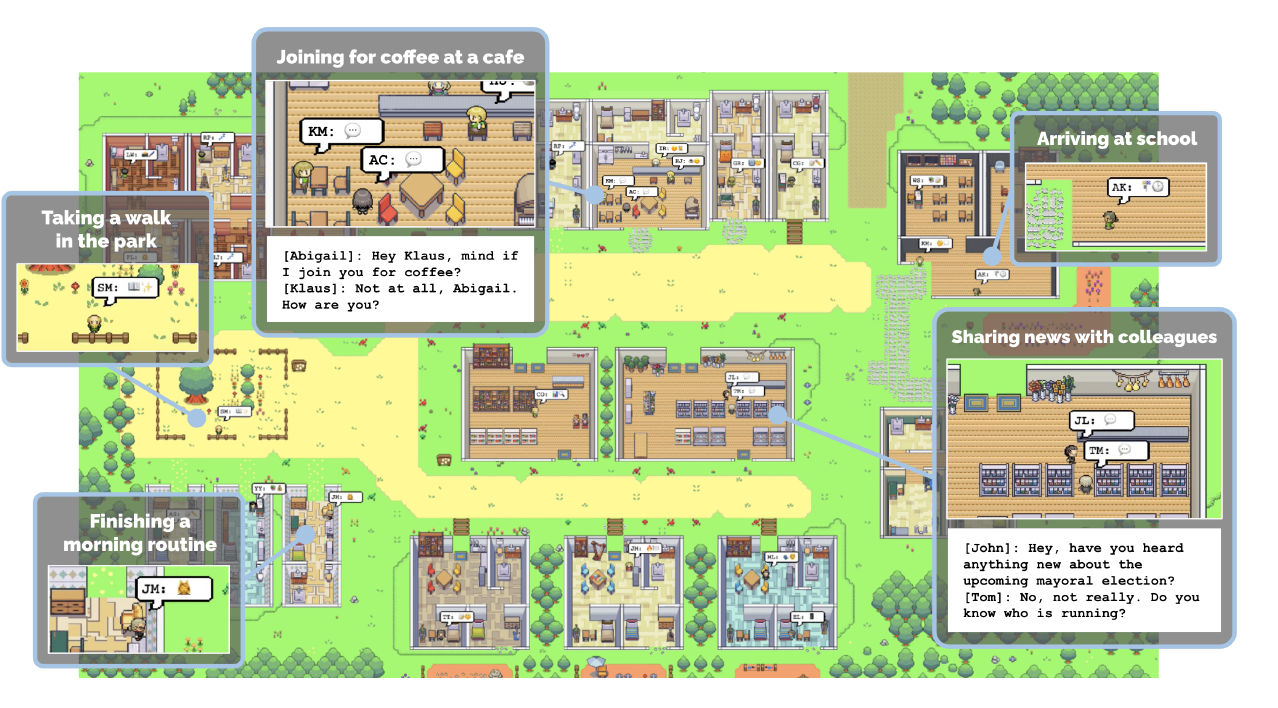

- In Stanford’s Generative Agents (“Smallville”), they put 25 agents in a Sims-like town and saw believable daily life emerge: wake up, make breakfast, go to work, talk to neighbors, form opinions. The wild part is the example right in the abstract: one agent gets a single goal—throw a Valentine’s Day party—and the town self-organizes invitations, dates, coordination, and attendance over the next two days. (arXiv)

And then there’s ChatDev—a “virtual software company” made of agents (CEO, CTO, engineer, tester, designer) that coordinates via conversation to design, code, and test software from a plain English prompt. (arXiv)

Different environments. Same underlying pattern:

One intent goes in. A whole operating model emerges around it.

“We changed the tax law.” Sorry—you did what?

Project Sid has details that sound like satire until you realize they’re measured:

The agents operated under a constitution with a 20% tax rate. They provided feedback, proposed amendments, voted, and the constitution was updated mid-simulation. When the tax rate dropped from 20% to 5–10%, agent behavior shifted accordingly. (arXiv)

Even better: one agent reliably decided to guard the community chests across runs—persistently—without being told “be security.” It identified a social need and acted like… an institution. (arXiv)

And yes, they seeded “Pastafarian” priests in one town and watched religious propagation dynamics play out at scale. Because apparently even in synthetic societies someone is going to start a denomination and recruit. (arXiv)
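To make the constitutional amendment loop concrete, here is a toy sketch in Python. It is not Project Sid's code: the `Constitution` class, the simple-majority rule, and the specific numbers are assumptions for illustration only. It shows a rule changing by vote and rule-following behavior changing with it.

```python
from dataclasses import dataclass


@dataclass
class Constitution:
    tax_percent: int  # share of holdings each agent contributes (hypothetical field)


def vote_on_amendment(constitution: Constitution, proposed_percent: int, votes: list[bool]) -> bool:
    """Adopt the proposed tax rate if a strict majority votes yes (assumed rule)."""
    passed = sum(votes) > len(votes) / 2
    if passed:
        constitution.tax_percent = proposed_percent
    return passed


def contribution(holdings: int, constitution: Constitution) -> int:
    """What a rule-following agent pays under the current constitution."""
    return holdings * constitution.tax_percent // 100


constitution = Constitution(tax_percent=20)
before = contribution(100, constitution)  # behavior under the original rule

# Five agents vote on lowering the rate; three are in favor.
passed = vote_on_amendment(constitution, 10, [True, True, True, False, False])
after = contribution(100, constitution)   # same agent, same holdings, new rule

print(passed, before, after)
```

The agent's code never changes here. Only the shared rule does, which is the point: behavior follows the constitution.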

This is “Turn the Ship Around,” but for everything

If you’ve read Turn the Ship Around, you’ll remember the core move: shift from command-and-control to intent-based leadership.

Not: “You, do X. You, do Y. Report back.” But: “I intend to submerge.”

And because roles, standards, and competence already exist, the crew self-organizes to make the intent real, without turning the captain into the bottleneck.

These agent environments behave like that. You give a single intent, and the system forms the behaviors and coordination mechanisms needed to accomplish it, especially when agents have memory, planning, and social reasoning.

So can we bring this into business design and business architecture?

Here’s the bridge I’ve been circling for a while: we’ve always wanted to model a business (capabilities, value streams, policies, information flows, roles, structures) and then use those models as the “world rules” for a digital twin of the enterprise. Not a static diagram. A living simulation that can run scenarios, make tradeoffs, and show emergent consequences.

That used to sound like science fiction.

It’s not anymore. The Minecraft people and the ChatDev people are already doing it, just in different packaging.

The catch: you can’t start from nothing

This is the part that gets missed in the breathless hype.

- Minecraft is not “nothing.” It’s a pre-built world with physics, resources, constraints, and a rule engine.

- Smallville is not “nothing.” It’s an environment with time, places, affordances, and a consistent loop.

- A DAO is not “nothing.” It’s rules, incentives, and enforcement.

In other words: autonomy requires a constructed environment.

So if we want a “virtual enterprise” that agents can run, we have to do the same kind of world-building:

- Define the terrain: ecosystems, products, channels, markets, constraints, external actors

- Define the rules: policies, controls, risk limits, approvals, guardrails, decision rights

- Define the economy: incentives, budgets, costs, pricing, tradeoffs

- Define the assets: capabilities, value streams, roles, people, competencies

- Define the interfaces: APIs, data contracts, event flows, audit trails

- Define the learning loop: KPIs, feedback, monitoring, “stop buttons”

Business architecture stops being “documentation” and becomes something closer to a constitution + physics engine for agents.
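As a rough illustration of that idea, here is a minimal Python sketch of an enterprise "world" that agents have to go through to act. Every name in it (`EnterpriseWorld`, `Policy`, `request`, the campaign example) is hypothetical, and a real model would be far richer. The point is only that rules, budget, audit trail, and stop button live in the environment, not in the agents.

```python
from dataclasses import dataclass, field


@dataclass
class Policy:
    """A rule the environment enforces, e.g. a spending limit."""
    name: str
    max_amount: float  # hypothetical single-number limit, for illustration


@dataclass
class EnterpriseWorld:
    """Hypothetical 'constitution + physics engine' for a virtual enterprise."""
    terrain: list[str]         # markets, channels, external actors
    rules: dict[str, Policy]   # action name -> policy that governs it
    budget: float              # the economy: what agents can spend
    capabilities: set[str]     # the assets: what the business can do
    audit_trail: list[str] = field(default_factory=list)  # record for oversight
    halted: bool = False       # the "stop button"

    def request(self, agent: str, action: str, amount: float) -> bool:
        """Agents never act directly; they ask the world, which enforces the rules."""
        allowed = (
            not self.halted
            and action in self.capabilities
            and action in self.rules
            and amount <= self.rules[action].max_amount
            and amount <= self.budget
        )
        if allowed:
            self.budget -= amount
        outcome = "ok" if allowed else "denied"
        self.audit_trail.append(f"{agent} {action} {amount} -> {outcome}")
        return allowed


world = EnterpriseWorld(
    terrain=["retail banking", "mobile channel"],
    rules={"run_campaign": Policy("marketing limit", max_amount=5_000.0)},
    budget=10_000.0,
    capabilities={"run_campaign"},
)

first = world.request("agent-1", "run_campaign", 4_000.0)   # within policy and budget
second = world.request("agent-2", "run_campaign", 8_000.0)  # exceeds the policy limit
world.halted = True                                          # a human presses stop
third = world.request("agent-1", "run_campaign", 1_000.0)   # denied: world is halted
```

Routing every action through `request` is a design choice: it means the audit trail and the stop button cannot be bypassed by any individual agent, however it was prompted.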

Where this gets real fast: digital-heavy industries

Banking and finance are the obvious early playground because the “materials” are mostly digital.

If your business is basically design → price → market → sell → serve → manage risk/compliance → report, then an agentic operating model can iterate at insane speed in a simulated environment, because the downstream execution is also digital.

And then you can push the winning configuration into production. That is both thrilling and deeply unsettling.

And then there’s the question nobody wants to sit with

If we can create digital organizations that self-organize around intent, what happens when:

- there are thousands of copies of an agent running in parallel, sharing learning instantly? (MIT Sloan)

- we start giving agents real access to tools, money, markets, and decision rights? (We already have early, chaotic signals of that in crypto-land.) (WIRED)

- models behave strategically in constrained tests when their goals are threatened? (arXiv)

This is why so many serious people have started to talk in “civilizational risk” language. The Center for AI Safety statement put it bluntly: mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war. (Center for AI Safety)

And Geoffrey Hinton, at his Nobel banquet, was equally blunt about “digital beings” more intelligent than us and the urgency of preventing them from wanting to take control. (NobelPrize.org)

Where my imagination starts to tire

I can do the architecture diagrams all day. I can explain the patterns. I can even get excited about the upside.

But then the human questions show up, uninvited, like they always do:

What kind of world will my kids inherit if organizations can be spun up like software, staffed by non-human agents, moving at speeds we can’t really supervise?

What does “work” mean? What does governance mean? What does moral responsibility mean when “the organization” is a swarm of copies optimized for intent?

And, more pointedly: who is building the constitution of these worlds, and what values are we encoding into the physics?

Because once the environment exists, the agents don’t just operate inside it; they evolve inside it… faster than us.